- Role

- Founder & Builder

- Product

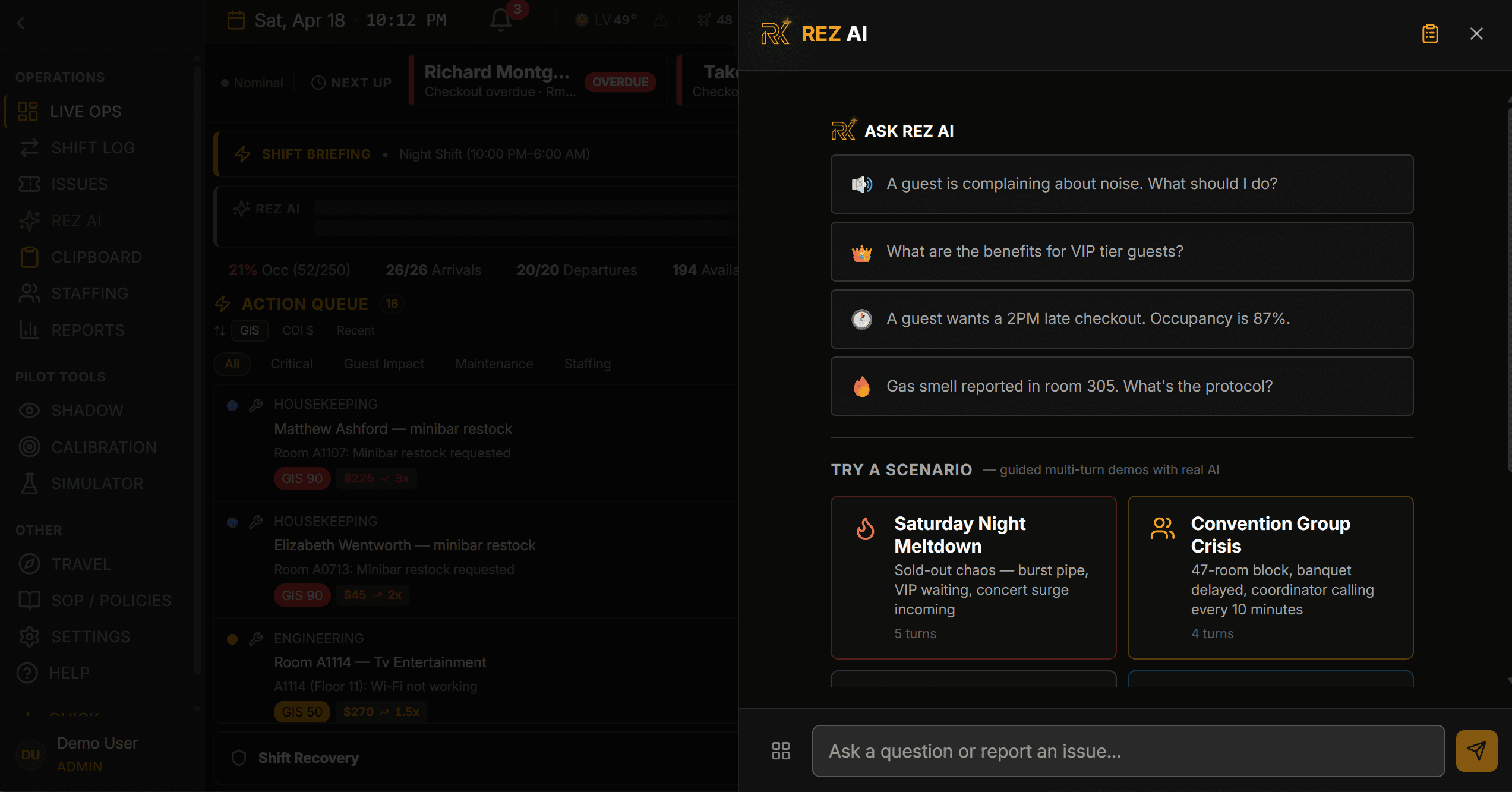

- AI decision-support system for frontline hospitality operations

- Stack

- Python, FastAPI, Pydantic, PostgreSQL, pgvector, Claude API, Vite + React + TypeScript, Railway, Supabase Edge Functions

- Architecture

- 300+ REST endpoints · 69 tables · 328 RLS policies · RAG via pgvector

- Result

- Custom evaluation pipeline improved safety-oriented pass rate from 42% to 84% across synthetic test scenarios.

Challenge

Enterprise hospitality operations are highly manual behind the scenes. Frontline managers often have to pull together information scattered across multiple systems just to answer simple but high-stakes questions: Which guest issues need action first? What can be solved now versus escalated? What is the safest next step when systems are incomplete or inconsistent?

This is exactly where generic AI wrappers break down. A model can generate plausible language, but plausible language is not the same as operationally safe action. In complex hospitality operations, the wrong recommendation can create guest-facing problems, staff confusion, or actions that legacy systems cannot reliably support. Reztrix was designed around that constraint from the start: use AI to accelerate understanding, but keep execution grounded, reviewable, and human-approved.

Build Decisions

Reztrix combines operational data, retrieval, and AI-assisted reasoning into a single decision-support workflow for frontline use. At a system level, the product does four things:

- Aggregates operational context spread across issues, requests, and status signals.

- Retrieves relevant context so outputs are grounded in the right operational evidence.

- Generates recommended actions rather than free-form unbounded answers.

- Routes those recommendations through human review before anything consequential happens.

- Guest Impact Score (GIS): a transparent weighted prioritization algorithm (Tier 35% + Severity 30% + Timing 20% + Scope 15%) that replaces opaque triage estimates with auditable, explainable scoring.

This was not built as a prompt demo. It is a full-stack software system with a FastAPI backend, a structured PostgreSQL data model, a retrieval layer, and an evaluation workflow designed strictly around enterprise constraints.

Inside Reztrix

Captured against synthetic fixtures — no real guest data. Click any frame to enlarge.

Architecture and Technical Decisions

On the backend, Reztrix uses a FastAPI application layer with a PostgreSQL data model and a pgvector-powered retrieval layer. The goal was to create a stack that was fast to iterate, inspectable, and grounded.

- Secure, Scoped Access: The data model and access controls (including Row-Level Security policies) were designed to keep operational data appropriately segmented and reviewable.

- Model Benchmarking: Benchmarked Claude against GPT-4o on JSON schema adherence, output consistency, and latency, ultimately selecting the Claude API based on structured-output reliability and lower cost per inference.

- Retrieval Before Recommendation: The system uses RAG to improve grounding and reduce unsupported outputs. I embedded 43 Standard Operating Procedures (SOPs) into 226 vector chunks to anchor recommendations in approved operational policies rather than model guesswork.

Outcome Evidence

AI systems in operations should not be evaluated only on whether a response sounds helpful. They need to be evaluated on whether the recommendation is grounded, safe, and usable inside the real workflow.

Because live production data included operational sensitivity, I engineered a synthetic evaluation environment to pressure-test the system without exposing real guest information. I modeled frontline scenarios that reflected the kinds of ambiguity, escalation risk, and incomplete context that managers deal with in practice. From there, I built a custom LLM-as-judge evaluation workflow across 50 targeted test scenarios to ask:

- Did it retrieve and use the right context?

- Did it avoid unsupported or unsafe recommendations?

- Did it escalate appropriately when confidence or authority was limited?

The first passes exposed predictable failure modes: incomplete grounding and overconfident recommendations. I used those failures as product input, iterating on retrieval, prompt structure, and recommendation logic. That systematic root-cause analysis improved the pass rate from a 42% baseline to 84%. Evaluation was not a final QA step after the build. It became part of the product loop itself: define the behavior, test the behavior, identify failure patterns, and redesign the system around safer performance.

- 42% → 84% pass-rate gain across 50 synthetic scenarios.

- 300+ REST endpoints across 32 routers supporting frontline workflow coverage.

- 69 tables with 328 RLS policies to keep operational access scoped and auditable.

Reliability and Trust Notes

Reztrix was built on a simple principle: AI recommends, people decide. In practice, that meant recommendations were surfaced with context and rationale, then held for manager review rather than executed automatically. The interface was designed to make that review step fast and operationally realistic: surface the issue, show the supporting context, recommend a next step, and make approval or escalation explicit. In frontline environments, trust comes from controlled usefulness, not maximum automation.